Cambridge University To Establish A Center For Terminator Studies


We all know that big movies have a wide-ranging cultural impact, from everyone and their uncles quoting Pulp Fiction to all of the “Philosophy of The Matrix” classes that popped up after the Wachowskis’ 1999 sci-fi hit. But now we’re about to have a center for so-called “Terminator studies.”

Cambridge University is establishing the facility to allow researchers to examine the threat that advances in robotics pose to the human race. Robots aren’t the only things that will be studied at the Centre for the Study of Existential Risk (CSER). Scientists will also dig into various other dangers to continued human survival, including “artificial intelligence, climate change, nuclear war, and rogue biotechnology.”

Basically, they’re going to study a bunch of threats that fuel a large portion of science fiction films, shows, and books. I went into the wrong field of study; this sounds way more fun than any of the classes that fill my transcripts.

Renowned philosopher, Cambridge professor, and one of the center’s three founders, Bertrand Russell, warns that “ultra-intelligent machines” and “artificial general intelligence (AGI),” could pose a number of potential threats to humanity. He says, “Nature didn’t anticipate us, and we in our turn shouldn’t take AGI for granted. We need to take seriously the possibility that there might be a ‘Pandora’s box’ moment with AGI that, if missed, could be disastrous.”

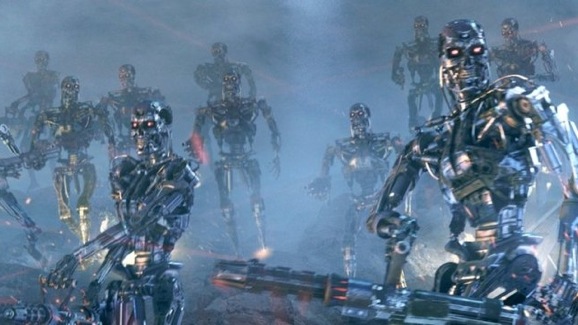

We’ve all seen the Terminator movies, and we know that what Skynet has in store for us. We’re all going to wind up in an underground bunker, engaged in a daily battle for survival. At least if the movies we all love are to be believed.

Russell elaborates on the potential hazards and pitfalls of artificial intelligence:

The basic philosophy is that we should be taking seriously the fact that we are getting to the point where our technologies have the potential to threaten our own existence—in a way that they simply haven’t up to now, in human history.