The United Nations Takes A Stance Against Killer Robots

Hopefully films like Pacific Rim will remain limited to the realm of science fiction, because if giant monsters ever did come through a rift beneath our oceans, the amount of political and bureaucratic bullshit that would have to happen before any Giant Freakin’ Robots got built would almost be a bigger headache than the monster threat itself.

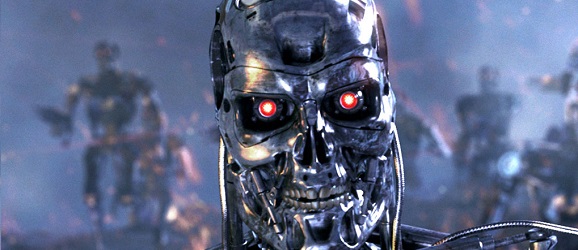

But while banning enormous, trans-dimensional monsters might seem unnecessary in our current times, getting rid of killer robots of any size has become a major issue for world leaders. The recently formed Campaign to Stop Killer Robots has found its biggest sponsor in the United Nations, which joined the fight last week in the form of a draft report for the U.N. Human Rights Council. The report will almost certainly take up a lot of talking time when the Council meets in Geneva, Switzerland, on May 29th.

The report’s author, South African human rights law professor Christof Heyns, is straight up calling for a global moratorium on the “testing, production, assembly, transfer, acquisition, deployment and use” of lethal autonomous robotics (LARs). And drones aren’t really his target, since those are controlled by humans more often than not; his concern is weapons that, once switched on, can select and engage targets without further human intervention.

He specifically cites several examples of fully or semi-autonomous weapons, including the U.S. Phalanx system used on Aegis-class cruisers, which automatically detects, tracks, and engages threats such as anti-ship missiles and aircraft. Britain’s Taranis combat drone autonomously searches for and identifies enemies and can defend itself, though mission command still has to authorize any engagement with a target. Israel’s Harpy is an autonomous system that detects and destroys radar emitters in the area. I don’t know about you guys, but I’m perfectly happy that none of these examples are robots that autonomously kill people. It’s always better to nip things like that in the bud before they come out of the iron woodwork.

To his credit, Heyns also concedes that killer bots…

…will not be susceptible to some of the human shortcomings that may undermine the protection of life. Typically they would not act out of revenge, panic, anger, spite, prejudice or fear. Moreover, unless specifically programmed to do so, robots would not cause intentional suffering on civilian populations, for example through torture.

He then adds the incredibly disturbing line, “Robots also do not rape.”

Keeping a human nominally in the loop doesn’t fix the problem either, since…

…the power to override may in reality be limited because the decision-making processes of robots are often measured in nanoseconds and the informational basis of those decisions may not be practically accessible to the supervisor. In such circumstances humans are de facto out of the loop and the machines thus effectively constitute LARs.

It’s obvious that this world is far too old to assume that everybody can just get along. But can’t we all just not get along without involving malicious robots?