This Autonomous Robot Creates Interpretive Works Of Art

One of the traits that distinguishes humans from animals is the ability to create art. Sure, animals do some cool stuff, but there’s usually a practical reason, rather than an aesthetic one (with the exception of the Kraken). Until fairly recently, humans figured that the desire and ability to create art separated us from robots too, but robotic musicians and other art-generating robots call this once-unique ability into question. Still, most of those robots are programmed to play, and it’s not as though they’re mechanical Beethovens, applying what they’ve learned about music theory to their own skills to create unique scores. Recently, artist and roboticist Patrick Tresset decided to create a robot that can autonomously create artwork inspired by its own interpretation of its environment.

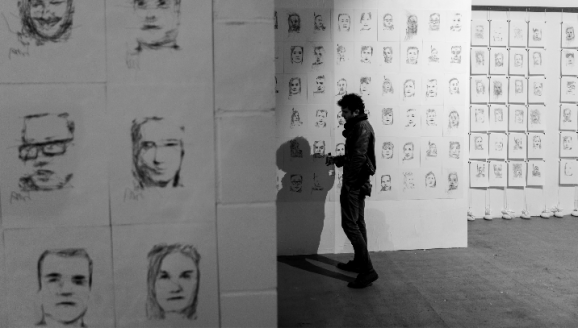

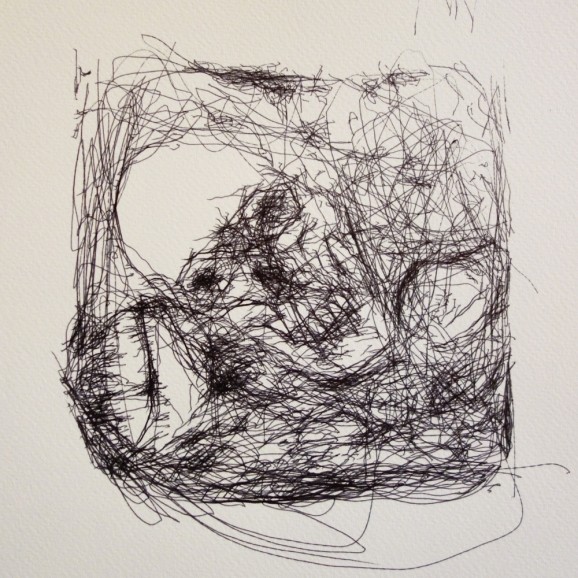

Tresset has been making robots for a while, namely upgraded versions of a robot named Paul, which he describes as a “creative prosthetic” and originally designed during a terrible case of painter’s block. A few years ago he created Paul and Pete (I have to wonder if there’s a Mary on the way), robots that sketched human faces using facial recognition technology and showed off their stuff at London’s Tenderpixel gallery. Now, many iterations later, Tresset has developed Paul-IX in an attempt to explore the question of whether robots can autonomously create “artifacts that stand as artworks,” specifically artworks that comment on the human condition.

Given that humans are capable of empathizing with robots, it’s worth exploring whether the opposite is true. Of course, without sentience perhaps this experiment is a bit limited, or even arbitrary, but anything that encourages humans to look at themselves and the world from another vantage point—even if it’s that of a non-sentient robot—is a worthwhile endeavor. Attempts to understand other points of view generally are.
