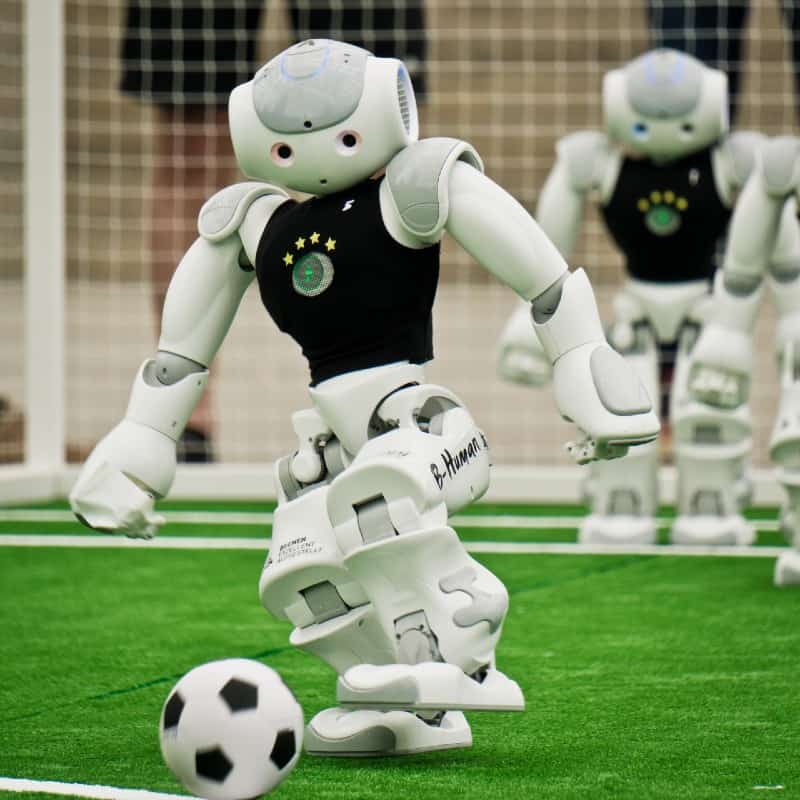

Watch Robots Learning To Play Soccer In An Adorable Yet Oddly Creepy Video

Google's DeepMind AI Lab trained robots to play soccer.

Google’s Bard AI may have had some learning difficulties when it was first revealed to the public, but another department, the DeepMind AI Lab, has had a significant breakthrough in machine learning. Robots may be able to join the NYPD, and they’re getting to be more humanoid, but they’re also learning to replace our greatest athletes on the pitch. As part of the lab’s experiments with deep reinforcement learning (Deep RL), a pair of adorable little machines learned to play soccer.

These robots aren’t following specific parameters entered by human engineers; rather, they are adjusting, on the fly, to the angle and location of the ball. Deep RL algorithms let the robots operate without constant inputs or pre-programmed conditions. The approach isn’t new: in 2013, DeepMind trained a program to play Atari games. More recently, OpenAI used the same technique to let a robot hand solve a Rubik’s Cube.

The Deep RL training also taught the robots to stand up, turn around, and run after the ball. Early in the video, the two cute robots can be seen flailing around, but as it continues, they become more and more sure of their actions as the learning algorithms kick in. Much as with Everton, dribbling the ball is still too difficult for them.

Despite that one setback, which even World Cup teams struggle with from time to time, the experiment was a resounding success for the research team. By sticking to an open-source robot design, rather than building one from scratch as Boston Dynamics does, they showed that million-dollar hardware is no longer required for results once confined to sci-fi movies.

Disney went in a different direction with its recent display of emotionally intelligent robots. The AI powering the Disney contraptions isn’t designed for constant learning but rather to connect emotionally with humans. What could be truly dangerous, and might even bring about the Singularity, is Deep RL combined with emotional intelligence.

Soccer-playing robots already exist, and they have their own tournament, the RoboCup. Google’s two pint-sized ballers differ from the tournament competitors because they weren’t designed specifically to compete in soccer. They had to learn through trial and error, with frequent rewards for good behavior. Google hasn’t yet explained how it delivered those rewards to the robots, but if cartoons taught us anything, it was likely sprockets.

Where the field of AI goes from here is anyone’s guess, as the technology, boosted by ChatGPT’s release late last year, seems to advance on a daily basis. Adorable soccer-playing robots are one thing, but machines that learn and adjust in real time while picking up garbage, driving a car, or waging war on a battlefield are a completely different matter.

For now, we appear to be safe from the robot revolution, as Deep RL, even at its most powerful, is still severely limited. Yet GPT-3 had clear limits, too, and the recent GPT-4 upgrade is significantly more powerful, so in five years, will we all be watching the robots dominate Blernsball?